Let’s assume we have a typical ASP.NET Web API back-end and a Single Page Application front-end. The front-end authenticates with JWTs. The problem is that the Hangfire dashboard is a classic ASP.NET MVC-like application (more precisely, a Razor Pages application) and does not integrate seamlessly with the JWT authentication used in the back-end Web API.

I came up with the following solution: create a new MVC endpoint that is authenticated using the existing attributes, but with the token included in the URL. Then use the browser’s session to communicate with the Hangfire Dashboard and mark a request as authenticated. A user accesses the dashboard by navigating to the new endpoint; if authentication succeeds, they are redirected to the main dashboard URL, which is authenticated just by a flag set in the session. The biggest advantage of this solution is that it requires no changes to the existing authentication and authorization mechanisms.

- Create a new MVC controller in the back-end Web API application. Use whatever authorization techniques and attributes are already used in the application for the API controllers.

-

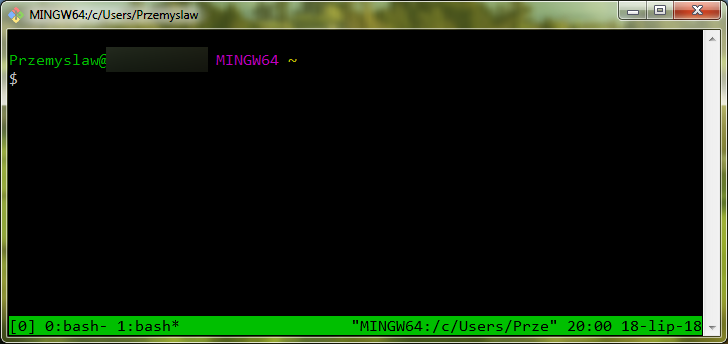

Enable the session mechanism. It may look strange for the Web Api, but anyways. Call

app.UseSessionbeforeapp.UseMvc - In the controller’s action set some flag in the session. This way, it will be set if, and only if the authentication and authorization succeeds

- Do the redirect to the Hangfire Dashboard endpoint
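The controller steps above can be sketched roughly as follows. This is a minimal sketch, not the original code: the controller name, the `hangfire-login` route, the session key, and the `/hangfire` dashboard path are all hypothetical, and `[Authorize]` stands in for whatever attributes the API controllers already use.

```csharp
using Microsoft.AspNetCore.Authorization;
using Microsoft.AspNetCore.Http;
using Microsoft.AspNetCore.Mvc;

// Hypothetical controller: route, session key, and redirect target are assumptions.
[Authorize] // reuse the attributes already applied to the API controllers
[Route("hangfire-login")]
public class HangfireLoginController : Controller
{
    public const string SessionKey = "HangfireAuthenticated";

    [HttpGet]
    public IActionResult Index()
    {
        // This code runs only if authentication and authorization succeeded,
        // so the flag is never set for an unauthenticated request.
        HttpContext.Session.SetString(SessionKey, "true");

        // "/" is the root of the domain; adjust it for subdirectory hosting.
        return Redirect("/hangfire");
    }
}

// Session registration in Startup (fragment, shown as comments):
//   services.AddDistributedMemoryCache();
//   services.AddSession();
//   app.UseSession();   // before app.UseMvc()
```

With these assumed names, a user would open something like `/hangfire-login?token=<jwt>` and land on the dashboard after the redirect.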

- Create a class that implements `IDashboardAuthorizationFilter`. It is the customization point for the authentication of the Hangfire Dashboard. Try to read the flag from the session and decide if the request is authenticated.
- Use `Authorization = new[] { new HangfireAuthorizationFilter() }` in the `DashboardOptions`.
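A filter of this kind could look roughly like the sketch below. It assumes the Hangfire.AspNetCore package (which provides the `GetHttpContext()` extension) and a session key named `HangfireAuthenticated`; the key is hypothetical and only has to match whatever the login action writes.

```csharp
using Hangfire.Dashboard;
using Microsoft.AspNetCore.Http;

public class HangfireAuthorizationFilter : IDashboardAuthorizationFilter
{
    // Assumption: the same key that the login controller action stores in the session.
    private const string SessionKey = "HangfireAuthenticated";

    public bool Authorize(DashboardContext context)
    {
        // GetHttpContext() is an extension method from the Hangfire.AspNetCore package.
        HttpContext httpContext = context.GetHttpContext();

        // The flag is present only if the login endpoint was reached with a valid token.
        return httpContext.Session.GetString(SessionKey) == "true";
    }
}
```

Because the filter reads `HttpContext.Session`, the session middleware has to run before the dashboard middleware in the pipeline.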

- Now the most important part, which enables the existing token-based authentication to work with the Hangfire Dashboard. Create a new middleware class that copies the token from the URL into the request headers. This allows the existing authentication mechanism to do its job without any modifications. Call `app.UseMiddleware<TokenFromUrlMiddleware>` before `app.UseAuthentication`.
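A minimal sketch of such a middleware is shown below. It assumes the token travels in a query string parameter called `token` and that the back-end expects a standard `Authorization: Bearer` header; both are assumptions, since the original code is not shown.

```csharp
using System.Threading.Tasks;
using Microsoft.AspNetCore.Http;

public class TokenFromUrlMiddleware
{
    private readonly RequestDelegate _next;

    public TokenFromUrlMiddleware(RequestDelegate next) => _next = next;

    public async Task InvokeAsync(HttpContext context)
    {
        // Assumption: the token arrives as ?token=<jwt>; the parameter name is hypothetical.
        string token = context.Request.Query["token"];

        // Leave requests alone if a regular API client already sent the header.
        if (!string.IsNullOrEmpty(token)
            && !context.Request.Headers.ContainsKey("Authorization"))
        {
            context.Request.Headers["Authorization"] = "Bearer " + token;
        }

        await _next(context);
    }
}
```

One possible refinement is to apply the rewrite only to the path of the login endpoint, so that tokens in URLs are not accepted by the rest of the API.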

There are some caveats to this solution, though.

- The solution may require some additional session setup in a multi-instance back-end configuration. By default, the session is stored in memory. Each instance then has its own copy of the session store, so a flag set on one instance is not visible to the others unless the session is configured to use a distributed cache such as Redis.

- The security of a token included in the URL is disputable, since URLs tend to end up in browser history and server logs. However, given my architectural drivers, it is acceptable, because the application is internal.

- There are some rough edges if the application is hosted in a virtual subdirectory of the domain using Kestrel, not IIS. Notice that the URL passed to `Redirect` begins with `/`, which is the root of the domain. We must adjust it if a subdirectory is used (prefix it with the subdirectory name). We must also inform Hangfire about the subdirectory: in that case, the real URL of the dashboard is not the main Hangfire path set in its options, but that path prefixed with the subdirectory. The dashboard is a black box from our point of view, and we cannot influence the way it makes its own HTTP requests. The only way of configuring its behavior is through its APIs. We use the `PrefixPath` property of the `DashboardOptions` to configure this.

- In my setup I also had to use `IgnoreAntiforgeryToken = true` because of some errors which occurred only in the containerized environment under Kubernetes. The final settings therefore combine the authorization filter, `PrefixPath`, and `IgnoreAntiforgeryToken`.
- Due to the discrepancies between the containerized environment and the local one, it is worth considering separate, conditionally compiled setup calls for the local DEBUG build and the RELEASE build. This way we can skip the prefixes required for subdirectory-based hosting when running locally.
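Put together, the dashboard settings described in the last few points might look like the sketch below. The helper name, the `/hangfire` path, and the `/my-app` prefix are placeholders, and setting `IgnoreAntiforgeryToken` unconditionally is an assumption; only the three option names come from the text.

```csharp
using Hangfire;
using Microsoft.AspNetCore.Builder;

public static class HangfireDashboardSetup
{
    // Hypothetical helper; "/hangfire" and "/my-app" are placeholder values.
    public static void UseSecuredHangfireDashboard(this IApplicationBuilder app)
    {
        var options = new DashboardOptions
        {
            // Session-flag based filter instead of the default local-only filter.
            Authorization = new[] { new HangfireAuthorizationFilter() },

            // Assumption: disabled everywhere, although the errors appeared only under Kubernetes.
            IgnoreAntiforgeryToken = true,
#if !DEBUG
            // Needed only for subdirectory hosting, so it is skipped in local DEBUG builds.
            PrefixPath = "/my-app",
#endif
        };

        app.UseHangfireDashboard("/hangfire", options);
    }
}
```

The `#if !DEBUG` block is one way to realize the conditionally compiled setup; reading the prefix from configuration would be an alternative that avoids compile-time branching.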

- There is an interesting SO post describing the differences between the `Path` and `PathBase` properties of the `HttpRequest`. Hangfire uses them internally to dynamically generate URLs for the requests sent by the dashboard. It turns out that these properties are used to detect the subdirectory part of the URL. They behave differently under IIS and Kestrel, unless a particular middleware is additionally plugged into the pipeline.
- By default, the session expires after 20 minutes of inactivity (for example, 20 minutes after closing the browser’s tab), or right after closing the entire browser.

- One can imagine a very unlikely corner case in which the real token is invalidated while the Hangfire session is open. In such a case, the dashboard will remain logged in. I consider these properties acceptable, though.
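For the multi-instance caveat above, the session can be backed by a shared cache. The following is a sketch only, assuming the Microsoft.Extensions.Caching.StackExchangeRedis package; the helper name, connection string, and instance name are placeholders. It also makes the 20-minute idle timeout explicit, which is where the session lifetime could be changed.

```csharp
using System;
using Microsoft.Extensions.DependencyInjection;

public static class SessionSetup
{
    // Hypothetical helper; the connection string and instance name are placeholders.
    public static void AddSharedSession(this IServiceCollection services)
    {
        // Registers a Redis-backed IDistributedCache, which the session store then uses.
        services.AddStackExchangeRedisCache(options =>
        {
            options.Configuration = "localhost:6379";
            options.InstanceName = "hangfire-session:";
        });

        services.AddSession(options =>
        {
            // 20 minutes is the framework default; stated here only to make it visible.
            options.IdleTimeout = TimeSpan.FromMinutes(20);
            options.Cookie.HttpOnly = true;
        });
    }
}
```

With a shared store, the flag set by one instance is visible to whichever instance serves the subsequent dashboard requests.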